After I wrote about Getting Things Done last week I began to think about how the GTD approach could work for developers working in a Scrum Team.

There’s no doubt in my mind that scrum is one of the best working processes for software development teams (yes there are others but scrum is incredibly prevalent and many teams across the world have a lot of success with it). However, having an effective scrum process does not guarantee that developers are productive or that they remember to complete the myriad of other day to day work tasks.

But first let’s talk about why GTD can help developers and why it’s not just another process to follow. Have you ever turned up to a meeting and had that sinking feeling when you realise you’ve forgotten to do your actions? Have you ever forgotten your timesheet? What about those times when you have tried to get your head down to write code but can’t quite forget all the other miscellaneous jobs which you have to finish before the end of the day? This is because there are many tasks associated with your role which do not relate to the product or the team.

As well as writing code we ask our developers to complete an endless array of other tasks such as regulatory training, end of year reviews, interviews, timesheets, and other initiatives. These, in my humble opinion, have no place on a Sprint Backlog. They’re not for the value of the team or the product but they do take time away from the sprint. Annoyingly, we don’t provide a mechanism for developers or testers to track these if we’re not using the board – we just expect them to “be organised” and somehow find that time during a planning session. This isn’t fair and it isn’t good enough.

Our goal has to be to assist developers with the tasks their job requires which don’t fit nicely into a sprint format.

Before we go any further I’d like to point out that GTD and Scrum are not entirely unrelated ideas. The five steps of GTD are:

- Capture

- Clarify

- Organise

- Review

- Engage

These match up almost perfectly with their scrum equivalents of:

- Requirements gathering

- Backlog refinement

- Planning

- Sprint Reviews and Retrospectives

- Delivering Value

David Allen, the creator of GTD, suggests reviewing your entire project list every week to ensure actions are kept up to date and nothing is missed. This process draws many parallels to a team reviewing its progress against a larger goal in a Sprint Review.

Implementing GTD for Developers

But how would you go about implementing GTD for a developer without treading on the scrum process? The first thing to realise is that the two frameworks manage work for different areas. Scrum is about product development; GTD is about managing your personal workload. There’s a crossover, where you’re doing work specifically for the product (hopefully a significant part of your day), but there are also significant areas where the two work independently.

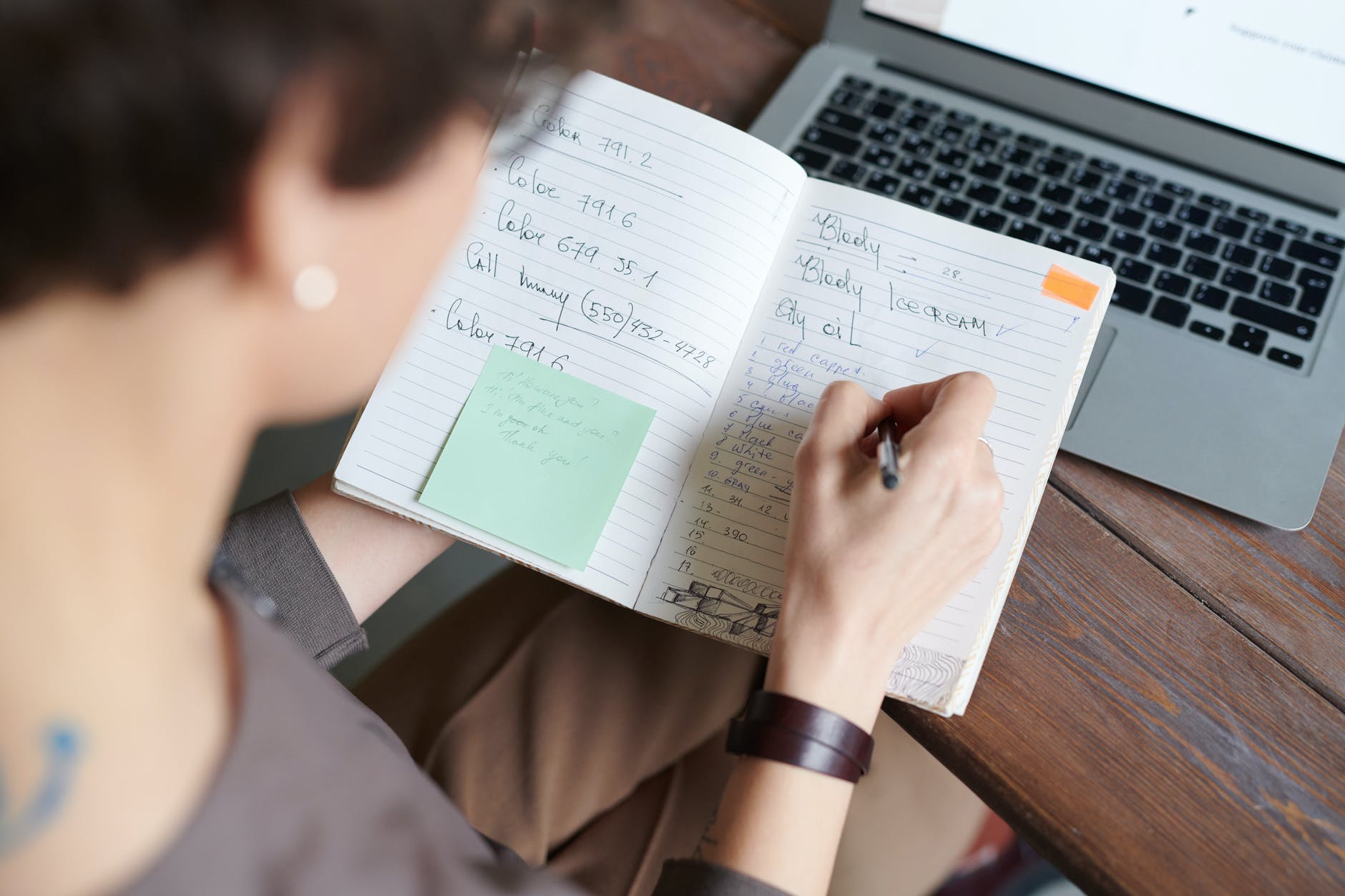

Capture Everything

The first step, as with pure GTD, is to capture everything. This can be on paper, on your computer, or in a notetaking app. This list will form the basis of your in tray and should contain everything which is in your head or on your desk at the moment. You can limit it to work, but personally I’d recommend including your personal life too.

Your list may include items like:

- Login functionality

- Potatoes

- Billy’s Feedback

- Hair Cut

- Boss’ email

- Metrics

One of the key tenets of GTD is to move all these items out of your head and into an inbox. Free up your brain for making decisions and having ideas, not for storing stuff which would be better suited to a piece of paper. Chances are the first time you run through this you will end up with hundreds of items on this list, far more than you ever expected! If you find yourself slowing down take a look at the GTD Trigger List to see if you can tease out any more and I’m sure you’ll think of a few you’ve forgotten.

Don’t be intimidated by the sheer number of items you’re finding now. They’re already in your head, the only difference is now you’re seeing that list for real. If we can visualise it, we can throttle the number of items in progress – just like you would on a scrum board.

Going forward you’re going to capture items as soon as you think of them so you don’t have the chance to forget them (remember what we said about remembering actions from meetings?). This list should never grow this large again.

Capturing Actions From Email

Email can be a particularly tricky one so I’ll cover that one specifically. I recommend creating three folders:

- Action Required

- Waiting For

- Archive

I’ll explain about Waiting For later on but I want you to go through your inbox and move every single email into either Action Required (you need to do something with this) or Archive. If you added it to the first folder, put it on your list.

Your goal should be to repeat this process at least daily. Remember your inbox is just that – it’s an in box. You should empty your inbox and decide what to do with each item (not necessarily action it) as often as is reasonably possible.

Clarify

Once you’ve constructed your list from your mind, various Post-it notes, your scrum board, and your inbox you’ll have a lot of work on your hands. I’m expecting you to have well over a hundred items. However, this list makes sense to you now but it won’t after a few days (or even hours). We’re not going to complete these items all at once and we need to know that whenever we come back to them they will still be clear to us. We want to record actions, things we are going to do – not just vague bullet points.

- Implement login functionality

- Buy potatoes

- Reply to Billy’s email requesting feedback

- Call hairdresser to confirm appointment

- Reply to boss’ email and answer question

- Check metrics on live site are within expected range

Note that all of these are now specific things for you to do, not vague points designed to remind you. David Allen (the creator of Getting Things Done) teaches us that one of the biggest causes of procrastination is not knowing what the next action or decision should be. Save your future self from that frustration by deciding what is actually needed right now, before you file it away to be done in the future.

Organise

GTD uses projects to organise work. The definition of a project is very broad.

“Projects are defined as outcomes that will require more than one action step to complete and that you can mark off as finished in the next 12 months.”

–David Allen

We’re going to use the actions list you captured to create your project list. I would recommend you keep a couple of high level projects to group various pieces of work together. I use:

- Home

- Work

- Personal Development

However you should choose the ones which seem most appropriate to you. Within each of these I would create a project called One Off Actions.

Next you’re going to work your way down your inbox, creating and organising projects as you go. The question you should keep asking yourself is whether your task is part of a wider project or if it’s a one off action. At this point you should also make a note of any deadlines for any of your actions.

Organising Actions Which Relate to Scrum Tasks

Many of the actions you have will relate to work you are currently undertaking on behalf of the scrum team. Code this, Code Review that, etc… There are a couple of ways to approach this. You could either create a duplicate item on your own system to track your work on the scrum board or you could omit these from your personal system.

There are advantages to both. Having work listed on both makes it less likely you’ll lose track of something, but at the expense of organisational overhead and keeping things in sync.

Personally I would recommend that you don’t track work you are doing as part of the scrum team in your GTD system as I would want to create a single source of truth. However, as long as you’re consistent I don’t believe there’s real harm either way.

Waiting For

As you are working through your actions you will undoubtedly encounter projects which are waiting on someone else to complete an action. One of the most common reasons projects go off the rails is because a task is sat with someone and it is never followed up. This is where the additional folder we created in your email comes in useful.

Whenever you have an action which is sat with someone else tag that action with the word Waiting. I would also record the date it was delegated, the name of the person, and the date you wish to follow it up. Depending on the technology you are using you will have different mechanisms for doing this ranging from reminders and tags to simply changing the title.

Drop any corresponding emails into that new Waiting For inbox folder so those messages are always close at hand. BCC yourself into any emails you send asking for someone to pick something up and drop it in that folder!

If at all possible keep the action sat in the same project folder, this will help maintain context with the overall project.
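If your tool doesn’t support tags or reminders, the details I suggested recording can be kept in something as simple as the sketch below. This is only an illustration: the `WaitingFor` class, its field names, the `delegate` and `due_for_follow_up` helpers, and the three day chase interval are all my own assumptions rather than anything prescribed by GTD or a particular app.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class WaitingFor:
    """An action that is sat with someone else (field names are illustrative)."""
    action: str
    project: str        # keep the action tied to its project for context
    person: str         # who it is sat with
    delegated_on: date  # when you handed it over
    follow_up_on: date  # when you want to chase it


def delegate(action, project, person, today, chase_after_days=3):
    """Record a delegated action; chasing after 3 days is an arbitrary default."""
    follow_up = today + timedelta(days=chase_after_days)
    return WaitingFor(action, project, person, today, follow_up)


def due_for_follow_up(items, today):
    """Return the waiting items whose follow-up date has arrived, oldest first."""
    due = [item for item in items if item.follow_up_on <= today]
    return sorted(due, key=lambda item: item.follow_up_on)
```

Running `due_for_follow_up` as part of a daily check gives you the list of people to chase, which is the step that usually gets forgotten.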

Agendas

Agendas are one of the hidden gems of GTD. Inside your work folder (although you could possibly do it for others too) I want you to create a folder for Agendas and then others underneath for:

- Daily Standup

- Sprint Planning

- Sprint Review

- Retrospective

- 1:1 with Boss

Add folders for any all hands calls or mentorship sessions you take part in too. These folders represent everything you want to discuss in those upcoming meetings. This means if you have an issue you need to speak about you will never have that frustrating feeling of not being able to quite remember what it is.

This means you can gather frustrations or highlights for the retrospective throughout the week and make a list of items you want to talk to your boss about rather than having to think of them on the spot. You can also capture any items which come from either of those two meetings quickly and easily in your inbox for clarifying and organising later.

Once you’ve raised your point and got your answer you can check it off. Remember you can use Waiting For if someone doesn’t have the solution for you right away!

Next

While creating your actions it is often helpful to tag (or otherwise highlight depending on the technology you are using) the next action which is required to move a project along. I also tag my actions with Email, Phone, and/or 15Minutes to help me categorise each item so I don’t have to jump from one application to another and can pick up quick tasks in between meetings.

Review

By now you should have a list of all the actions you have committed to and their appropriate due dates. David Allen recommends you reserve some time each week to zero your inboxes and review your projects, next actions, waiting for lists, and agendas, and I would agree.

You’re looking for anything which is out of date, irrelevant, or no longer needed. You’re also looking for the next steps to progress your projects.

I would add one additional suggestion. Ensure you schedule your personal GTD review before your sprint ceremonies. If you don’t have a clear vision of your commitments outside the sprint then you are never going to be able to give a fair view of capacity in those planning sessions. If you know you’ve got a large task to complete for a senior manager you’re now aware of that task and can take on less work in the sprint planning session.
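To make the capacity point concrete, here is a rough sketch of the arithmetic. Every number in it is made up for illustration, and the `sprint_capacity_hours` function and its parameter names are hypothetical; your team may well measure capacity in story points or days instead.

```python
def sprint_capacity_hours(working_days, focus_hours_per_day, non_sprint_commitments):
    """Hours left for sprint work once commitments outside the sprint are removed.

    non_sprint_commitments maps a description to estimated hours, taken from
    your GTD review (the entries used below are invented examples).
    """
    total = working_days * focus_hours_per_day
    committed = sum(non_sprint_commitments.values())
    # Never report negative capacity; zero means you are already overcommitted.
    return max(total - committed, 0)


commitments = {
    "Task for senior manager": 8,
    "Regulatory training": 2,
    "Timesheets": 1,
}
available = sprint_capacity_hours(
    working_days=10, focus_hours_per_day=6, non_sprint_commitments=commitments
)
print(available)  # 60 hours in the sprint minus 11 committed elsewhere = 49
```

The exact figure matters less than having one to hand before planning starts.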

Engage

Now we’ve got this wonderfully organised list of work it’s time to do some! There are a number of ways for deciding what to work on:

- How long it will take

- How urgent it is

- Whether you have the right tools required (and to a degree the inclination) at that time.
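As a loose sketch of how those three criteria could be combined, the snippet below filters a list of actions by the time you have free and the tools to hand, then sorts what is left by deadline. The `Action` class, the `candidates` function, and the way tags double up as "tools required" are my own assumptions for illustration, not part of GTD or any particular app.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class Action:
    title: str
    minutes: int                            # how long it will take
    due: Optional[date] = None              # how urgent it is (None = no deadline)
    tags: set = field(default_factory=set)  # tools required, e.g. {"Email"}


def candidates(actions, minutes_free, tools_available):
    """Actions that fit the time and tools available, most urgent first."""
    doable = [
        a for a in actions
        if a.minutes <= minutes_free and a.tags <= tools_available
    ]
    # Actions with a deadline come first (earliest date first);
    # ties and undated actions are ordered shortest first.
    return sorted(doable, key=lambda a: (a.due is None, a.due or date.max, a.minutes))
```

For example, calling `candidates(my_actions, 15, {"Email"})` between meetings would return only the email actions short enough to finish in fifteen minutes, with the nearest deadline at the top.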

Obviously the vast majority of your time will be for scrum tasks (or at least I hope it would be). However as we mentioned at the start it’s important that these other pieces are picked up and not forgotten about. I would recommend finding a certain amount of time each day to review this list and look for any actions and make sure you have plenty of time to complete them before their respective deadlines.

10 minutes at the start of the day (ideally before the daily standup) is all that’s needed to make sure you can plan effectively and you don’t miss anything which needs doing alongside your scrum deliverables.

A Note On Technology

I deliberately haven’t talked about technology in this post. The tool doesn’t make the system but it’s clear you’re going to need a good tool to keep this system working.

In his book David Allen talks a lot about Pen and Paper but doesn’t go too much into digital tools (although personally I think he’s a little naive assuming that people won’t reach for an online tool first).

Personally I’d recommend Todoist. I find it very intuitive and reliable and they have a great GTD guide available which walks you through many of the suggestions I’ve made. However, clearly there are other applications and tools available – please feel free to share any suggestions you’ve got in the comments.

In Conclusion

In this post I’ve tried to explain why using a system like GTD is so important to developers and how it can be used to handle work which the scrum process doesn’t. I’d highly recommend having a read of the Todoist GTD Guide and giving their free version a go. If you have any other suggestions on how to integrate the two processes I’d be very keen to hear them.